A quarterly territory review should review the territory, not the rep. The rep’s number is the output of the system. The territory design is the input. Most reviews invert that order and end up running a quota inspection wearing a different name on the calendar invite.

The meeting most sales organizations call a QBR is a forum where a rep defends a number. The review the territory itself needs is a system-level read on the design that produced the number, asking if it is still aligned with the accounts inside it, the geography around it, and the competitors moving through it. Different meeting, different attendees, different output, different decisions.

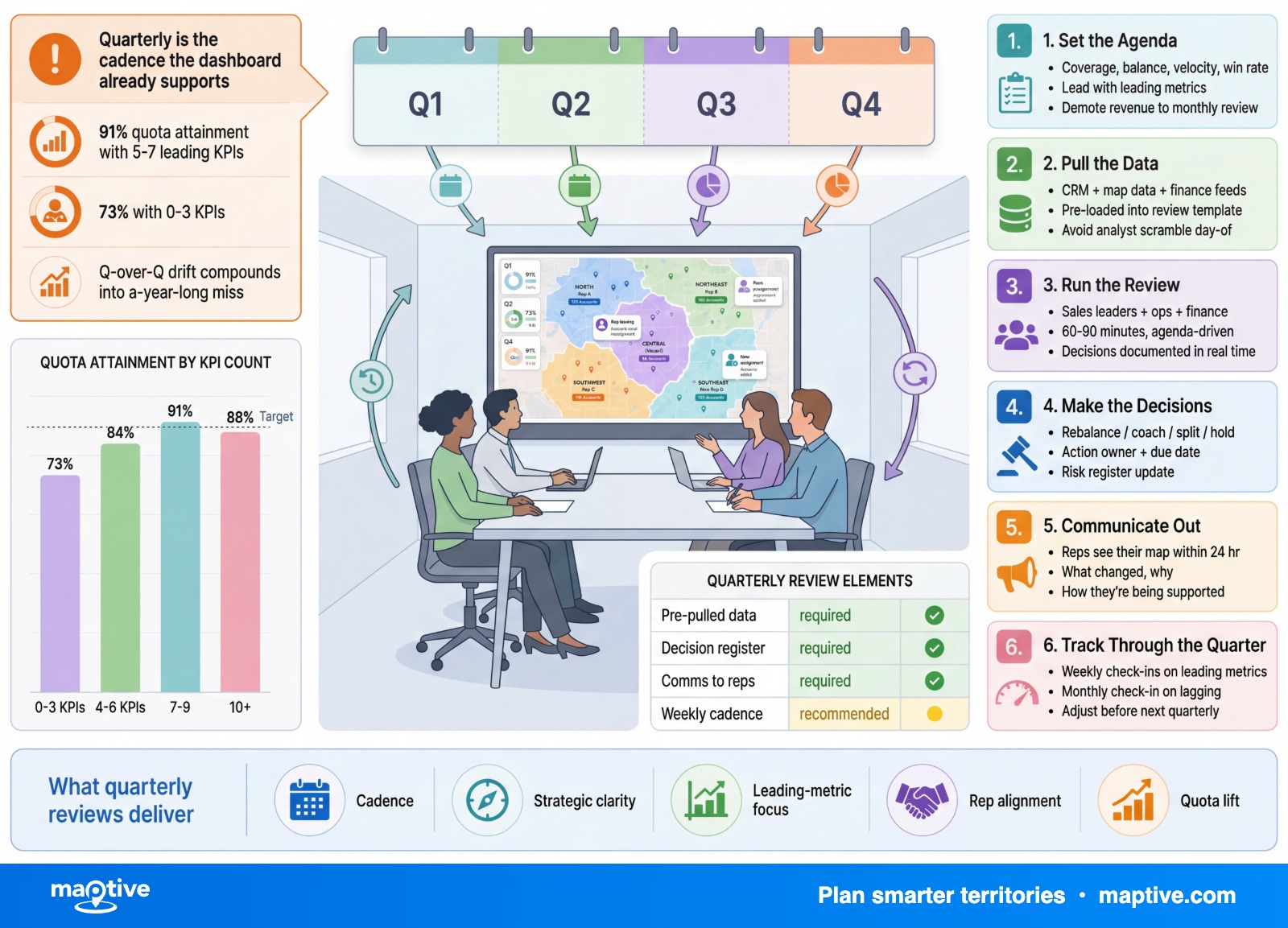

The six-step framework below treats the territory as the entity under review. The rep enters as a qualitative source after the structural diagnostic, not before. The order is the framework.

The Reframe From Rep Review to Territory Review

Most teams confuse two questions and answer neither cleanly. Is the design right? Is the operator right? Both matter, both have to be asked, and they have to be asked in that order, because the operator’s number cannot be read without first reading the system the operator works inside.

Sales Management Association research with Xactly found that 58% of firms do not consider their territory design effective; 2024 coverage restates the finding as only 36% of firms rating their design effective. Read it twice. On either figure, a clear majority of sales organizations have looked at the structure that produces their revenue and concluded it does not work, and then asked their reps to hit numbers inside it. The missed number is a design problem most teams treat as a hiring problem.

The conflation happens because quota attainment is easy to pull, bonus-linked, and personal. A rep number arrives in the inbox every Monday. A territory-level diagnostic requires pulling pipeline coverage by region, deal velocity by segment, win-rate dispersion controlled for tenure, and competitor activity by polygon, none of which has a single owner in most sales orgs. The rep number wins by default.

The cost shows up two quarters later. A rep on a structurally broken territory gets coached, placed on a plan, or replaced. The replacement produces the same number nine months in because the design produced the number, not the seat. The reframe is one sentence. Review the territory first, review the rep second, act on both with the diagnoses kept separate.

Step 1: Pull the Standardized Territory Snapshot

The snapshot has to be identical in shape across every territory before any comparison is possible. Step one collapses in most reviews because each manager pulls a different template, and the meeting spends its first hour arguing about whose pipeline number counts and which stage definition Salesforce is currently using.

The minimum required fields are five. Account list with revenue and deal stage. Current pipeline by stage. Headcount, including ramped and unramped. Last-quarter close rate. Drive-time totals for any field-based team.

Assign one owner per data slice a week before the meeting and name the owner in the calendar invite. Pipeline coverage belongs to sales ops. Account composition belongs to the territory manager. Drive-time belongs to whoever runs the mapping layer. Each owner ships their slice 48 hours before the review in the agreed format. The shipment is the deliverable, not a verbal report inside the meeting.

The output of step one is a single table. One row per territory, identical columns. If that table cannot be produced, the review cannot run.
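
The "identical columns" rule can be checked mechanically before anyone walks into the room. The sketch below is one way to do it, assuming each owner ships their slice as plain dictionaries that have already been merged into one row per territory. The column names are hypothetical stand-ins for the five minimum fields, not a prescribed schema.

```python
# Hypothetical column names for the five minimum fields, plus the territory key.
REQUIRED_COLUMNS = {
    "territory",
    "accounts",                 # account list with revenue and deal stage
    "pipeline_by_stage",        # current pipeline by stage
    "headcount_ramped",
    "headcount_unramped",
    "last_quarter_close_rate",
    "drive_time_hours",         # None for teams that do not work in the field
}


def shape_errors(rows: list[dict]) -> list[str]:
    """Return one message per row whose columns differ from REQUIRED_COLUMNS."""
    errors = []
    for index, row in enumerate(rows):
        columns = set(row)
        missing = sorted(REQUIRED_COLUMNS - columns)
        extra = sorted(columns - REQUIRED_COLUMNS)
        if missing or extra:
            name = row.get("territory", f"row {index}")
            errors.append(f"{name}: missing={missing} extra={extra}")
    return errors


# Illustrative rows: North follows the template, South used its own.
rows = [
    {
        "territory": "North",
        "accounts": [{"name": "Acme", "revenue": 120_000, "stage": "proposal"}],
        "pipeline_by_stage": {"discovery": 400_000, "proposal": 250_000},
        "headcount_ramped": 3,
        "headcount_unramped": 1,
        "last_quarter_close_rate": 0.22,
        "drive_time_hours": 310.0,
    },
    {"territory": "South", "pipeline": 900_000},
]

for problem in shape_errors(rows):
    print(problem)
```

An empty list from `shape_errors` means the table can be built. Anything else goes back to the slice owner before the meeting, not into it.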

Step 2: Compute the Leading Health Metrics

Revenue is a lagging indicator. By the time the revenue number arrives, the deals are closed and the period is over. A review built on revenue alone arrives at the diagnostic one or two quarters after the design problem started producing it. Step two is where the framework reaches for indicators that move first, while there is still room inside the cycle to act.

Five metrics carry most of the diagnostic weight, grouped under the four headings below (deal velocity and win rate share one). Compute them per territory, with consistent definitions, and bring them to the meeting already computed.

Pipeline Coverage Ratio by Territory

Coverage is the value of pipeline divided by the quota it has to cover. B2B SaaS organizations sit comfortably in the 3-5x range. New territories or new product lines run higher, often 5-7x. A territory at 2x while peers sit at 5x is a demand-generation signal, not a rep-effort signal.
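
The ratio itself is a single division, which is part of why it is worth computing the same way everywhere. A minimal sketch follows, using made-up pipeline and quota figures that reproduce the 2x versus 5x situation described above.

```python
from statistics import median


def coverage_ratio(open_pipeline: float, quota: float) -> float:
    """Pipeline value divided by the quota it has to cover."""
    if quota <= 0:
        raise ValueError("quota must be positive")
    return open_pipeline / quota


# Hypothetical figures: (open pipeline, quota) per territory.
book = {
    "North": (1_000_000, 500_000),
    "South": (2_500_000, 500_000),
    "East": (2_400_000, 480_000),
    "West": (2_750_000, 550_000),
}

ratios = {name: coverage_ratio(p, q) for name, (p, q) in book.items()}
system_median = median(ratios.values())

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.1f}x (system median {system_median:.1f}x)")
```

In this illustrative data North sits at 2x against a system median of 5x, which is the pattern the text reads as a demand-generation signal.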

Conversion Rate by Stage

Stage-by-stage conversion has to be compared to the system median, not to a static benchmark. A 15% gap at the same stage across multiple reps inside one territory is not a coaching problem. It is a stage-specific problem the territory design either creates or fails to compensate for.
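
Comparing to the system median rather than a fixed benchmark can be sketched as below. The stage names and rates are hypothetical, and the gaps are expressed in percentage points, which is one reading of the 15% gap mentioned above.

```python
from statistics import median


def gaps_to_system_median(
    conversion: dict[str, dict[str, float]]
) -> dict[str, dict[str, float]]:
    """For each territory and stage, return rate minus the system median rate."""
    stages = list(next(iter(conversion.values())))
    system = {
        stage: median(rates[stage] for rates in conversion.values())
        for stage in stages
    }
    return {
        territory: {stage: rates[stage] - system[stage] for stage in stages}
        for territory, rates in conversion.items()
    }


# Hypothetical stage conversion rates per territory.
conversion = {
    "North": {"discovery_to_demo": 0.40, "demo_to_proposal": 0.50},
    "South": {"discovery_to_demo": 0.42, "demo_to_proposal": 0.35},
    "East": {"discovery_to_demo": 0.38, "demo_to_proposal": 0.52},
}

gaps = gaps_to_system_median(conversion)
for territory, by_stage in gaps.items():
    for stage, gap in by_stage.items():
        print(f"{territory} {stage}: {gap:+.2f}")
```

South converts in line with peers at the first stage and falls 15 points behind at the second, so the question for the room is what happens at that specific stage in that specific territory.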

Deal Velocity and Win Rate

Velocity slowdowns inside a single territory often track a competitor entering the polygon or an ICP drift. Win rate read in isolation conflates rep skill and territory mix; win rate read by deal size separates them.

Activity-to-Outcome Ratio

Two reps making the same number of calls and producing different pipeline values are working in two different account densities. Activity-to-outcome is the cleanest way to surface that asymmetry. When it shows up, frame it as a territory question first.
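
One simple form of the ratio is pipeline created per logged activity. The sketch below assumes calls are the activity being counted and uses invented figures. Any consistent activity definition would work the same way.

```python
def pipeline_per_activity(pipeline_created: float, activities: int) -> float:
    """Pipeline value generated per logged activity (for example, per call)."""
    if activities <= 0:
        raise ValueError("activities must be positive")
    return pipeline_created / activities


# Hypothetical quarter: same call volume, different territories.
rep_a = pipeline_per_activity(pipeline_created=600_000, activities=400)
rep_b = pipeline_per_activity(pipeline_created=200_000, activities=400)

print(f"Rep A: {rep_a:,.0f} per call")
print(f"Rep B: {rep_b:,.0f} per call")
print(f"Ratio A to B: {rep_a / rep_b:.1f}x")
```

Equal effort with a threefold difference in yield is the asymmetry the text describes. The number does not say why the gap exists, only that account density is the first place to look.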

Step 3: Compare to Prior Quarter and to System Mean

A territory looked at in isolation tells the wrong story most of the time. The same coverage number looks like a crisis or like an average performance depending on what the peer territories did the same quarter. The double comparison is what makes the review diagnostic rather than anecdotal.

For every metric, compute two deltas. Versus prior quarter, which gives trajectory. Versus peer median, which gives relative position. A territory that dropped 12% on coverage while the rest of the system dropped 14% has been pulled by the market, not by the design or the operator. A territory that held flat while peers grew 18% is structurally underperforming and the flat reading is hiding it.
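
The two deltas can be computed side by side, as in the sketch below. It assumes one metric value per territory for the current and prior quarter, and it adds a third number, the median peer trajectory, because that is what separates a market pull from a territory problem in the example above. The function and key names are illustrative.

```python
from statistics import median


def pct_change(current: float, baseline: float) -> float:
    """Relative change from baseline to current, as a fraction."""
    return (current - baseline) / baseline


def two_deltas(
    current: dict[str, float], prior: dict[str, float], territory: str
) -> dict[str, float]:
    """Trajectory and relative position for one territory on one metric."""
    peers = [name for name in current if name != territory]
    return {
        # Trajectory: this quarter versus last quarter.
        "vs_prior": pct_change(current[territory], prior[territory]),
        # Relative position: this territory versus the peer median level.
        "vs_peer_median": pct_change(
            current[territory], median(current[p] for p in peers)
        ),
        # Context: how the peers themselves moved quarter over quarter.
        "peer_trend": median(pct_change(current[p], prior[p]) for p in peers),
    }


# Hypothetical coverage ratios reproducing the 12% versus 14% drop.
prior = {"North": 4.0, "South": 4.0, "East": 4.0, "West": 4.0}
current = {"North": 3.52, "South": 3.44, "East": 3.44, "West": 3.44}

for key, value in two_deltas(current, prior, "North").items():
    print(f"{key}: {value:+.1%}")
```

Here North fell about 12% while its peers fell about 14%, so the drop reads as market movement and North ends the quarter slightly ahead of the peer median.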

Define the acceptable band before the meeting, not during it. Plus or minus 20% of the system mean is the working default. Anything outside the band is flagged for explanation, not judgment. Flagging is a status, not a verdict.
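
Applying the band is mechanical once the tolerance is agreed. A minimal sketch, assuming a metric with a positive system mean and using invented coverage ratios:

```python
from statistics import mean


def flag_outside_band(
    values: dict[str, float], tolerance: float = 0.20
) -> dict[str, str]:
    """Mark each territory as in_band or flagged relative to the system mean."""
    system_mean = mean(values.values())
    low = system_mean * (1 - tolerance)
    high = system_mean * (1 + tolerance)
    return {
        territory: "in_band" if low <= value <= high else "flagged"
        for territory, value in values.items()
    }


# Hypothetical coverage ratios; the system mean is 3.75, so the band is 3.0 to 4.5.
coverage = {"North": 4.0, "South": 4.4, "East": 2.0, "West": 4.6}

for territory, status in flag_outside_band(coverage).items():
    print(f"{territory}: {status}")
```

West is flagged for being above the band, not below it. A flag in either direction asks for an explanation and nothing more.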

Watch for the bimodal team. A sales organization where a third of territories are 50% over the band and a third are 50% under it averages out to a healthy mean, looks fine on the summary slide, and is structurally broken underneath. The stakes are measurable: SMA research finds organizations effective at territory design are 14% more likely to hit their sales goals, and the gap between effective and ineffective territory planning is roughly 30 points in attainment.
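
A summary slide hides this pattern because it reports the mean. Reporting the share of territories on each side of the band alongside the mean exposes it. The sketch below uses the ±20% working default and hypothetical attainment figures, with 1.0 meaning 100% of quota.

```python
from statistics import mean


def share_outside_band(
    values: list[float], tolerance: float = 0.20
) -> tuple[float, float]:
    """Return (share above the band, share below the band)."""
    system_mean = mean(values)
    above = sum(v > system_mean * (1 + tolerance) for v in values)
    below = sum(v < system_mean * (1 - tolerance) for v in values)
    return above / len(values), below / len(values)


# Hypothetical attainment by territory: the mean is exactly 1.0.
attainment = [1.5, 1.5, 1.0, 1.0, 0.5, 0.5]

above, below = share_outside_band(attainment)
print(f"mean attainment: {mean(attainment):.0%}")
print(f"above band: {above:.0%}, below band: {below:.0%}")
```

The mean reads as on plan while two thirds of the territories sit outside the band, split evenly between the two tails.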

Step 4: Flag Drift and Generate Territory-Level Explanations

A flagged territory needs a territory-level explanation before the rep enters the room. The order is structural, not procedural. Bringing the rep in first reverts the meeting to a quota inspection because the rep’s only available defense is to explain the number.

Four drift causes have to be tested by the data first. Account composition change, meaning wins, losses, expansions, and contractions inside the territory’s account list. Firmographic or ICP drift, where the underlying buyer profile has moved. Drive-time growth, for any team whose work happens in the field. Competitor activity inside the territory’s geography or vertical.

The drive-time variable is the one most often skipped. For field-based teams, every 30 minutes of saved drive time per day works out to roughly 12 extra selling days per year per rep. Traffic patterns change, account locations change, rep home bases change, and the drive-time map computed at the start of the year is rarely the drive-time map at the end of Q3. A recompute belongs in every quarterly cycle for any team that drives to its accounts.
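
The selling-days figure is simple arithmetic, and it moves with the assumptions behind it. The inputs used to reach roughly 12 days are not stated here, so the sketch below treats working days per year and hours per selling day as explicit, hypothetical parameters.

```python
def extra_selling_days(
    minutes_saved_per_day: float,
    working_days_per_year: int,
    hours_per_selling_day: float,
) -> float:
    """Convert daily drive time saved into equivalent selling days per year."""
    hours_saved = minutes_saved_per_day / 60 * working_days_per_year
    return hours_saved / hours_per_selling_day


# Assumed inputs: 230 working days, and either an 8-hour or a 10-hour field day.
short_day = extra_selling_days(30, working_days_per_year=230, hours_per_selling_day=8)
long_day = extra_selling_days(30, working_days_per_year=230, hours_per_selling_day=10)

print(f"8-hour days:  {short_day:.1f} extra selling days")
print(f"10-hour days: {long_day:.1f} extra selling days")
```

Under these assumptions the result lands between about 11.5 and 14.4 days per rep per year, the same order of magnitude as the figure quoted above.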

After the data has produced a territory-level read, the rep joins. The contribution at this point is qualitative information the system cannot pull: which named accounts have been acquired, which are in a budget freeze, which competitor showed up at the last three deals.

Step 5: Decide the Action With a Four-Option Tree

A four-option decision tree prevents the two failure modes that haunt these meetings. Doing nothing because nothing felt structural enough to act on. Or fixing everything because every territory had something off. Four discrete options keep the action proportionate to the diagnosis.

No Change

Performance is inside the band, the drivers are explained, no action is needed. This is the hardest call to make in a meeting full of leaders who want to be seen acting, which is exactly why it has to be made explicitly.

Monitor

Edge of the band, ambiguous driver. Revisit next quarter with a named metric to inspect. The named metric matters. “Watch this one” is not a decision.

Adjust

Quota tweak, account move, support add, training plan. No boundary change. Adjust is for territories where the design holds but the inputs need calibration.

Rebalance

Boundary change, headcount move, or full redesign. Finance and HR have to be in the room before this option lands or the decision gets blocked downstream when comp implications surface. A rebalance executes at the start of the next quarter, not mid-quarter.
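
The four options can be written down as an explicit tree so the meeting argues about the inputs, not the outcome. The sketch below is one possible encoding, not a rule taken from the framework: it assumes three yes-or-no judgments made in the room (inside the band, driver explained, design still holds) and maps them to the four options.

```python
from enum import Enum


class Action(Enum):
    NO_CHANGE = "no change"
    MONITOR = "monitor"
    ADJUST = "adjust"
    REBALANCE = "rebalance"


def decide(in_band: bool, driver_explained: bool, design_holds: bool) -> Action:
    """Map three diagnostic judgments to one of the four options."""
    if in_band and driver_explained:
        return Action.NO_CHANGE
    if not driver_explained:
        # Ambiguous driver: revisit next quarter with a named metric.
        return Action.MONITOR
    # Outside the band with an explained driver: calibrate or redraw.
    return Action.ADJUST if design_holds else Action.REBALANCE


print(decide(in_band=True, driver_explained=True, design_holds=True).value)
print(decide(in_band=True, driver_explained=False, design_holds=True).value)
print(decide(in_band=False, driver_explained=True, design_holds=True).value)
print(decide(in_band=False, driver_explained=True, design_holds=False).value)
```

Treating every unexplained driver as Monitor, including one outside the band, is an assumption of this sketch. A team could reasonably decide that an unexplained reading far outside the band needs another diagnostic pass before any option is chosen.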

Step 6: Document the Baseline for the Next Review

A review that produces decisions without a documented baseline produces the same review again next quarter. Without the baseline, the next cycle starts from scratch on its own data definitions and its own interpretation of normal.

The output artifact is a one-page action sheet per flagged territory. Five fields. The decision (no change, monitor, adjust, rebalance). The owner. The deadline. The success metric. The re-inspection date.
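
The action sheet is small enough to model as a record that refuses to be saved half-filled. A minimal sketch, with hypothetical field names for the five fields plus a territory key, and an invented example entry:

```python
from dataclasses import dataclass
from datetime import date

DECISIONS = ("no change", "monitor", "adjust", "rebalance")


@dataclass(frozen=True)
class ActionSheet:
    territory: str            # key for the sheet, not one of the five fields
    decision: str
    owner: str
    deadline: date
    success_metric: str
    reinspection_date: date

    def __post_init__(self) -> None:
        if self.decision not in DECISIONS:
            raise ValueError(f"decision must be one of {DECISIONS}")
        if not self.owner.strip():
            raise ValueError("every sheet needs a named owner")
        if not self.success_metric.strip():
            raise ValueError("every sheet needs a named success metric")


# Hypothetical sheet for a flagged territory.
sheet = ActionSheet(
    territory="East",
    decision="monitor",
    owner="Regional sales manager",
    deadline=date(2025, 6, 30),
    success_metric="pipeline coverage ratio",
    reinspection_date=date(2025, 7, 15),
)
print(sheet)
```

Rejecting a blank success metric enforces in code the point that a decision without a named metric is not yet a decision.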

The snapshot from step one becomes next quarter’s prior-quarter comparison. The metric set from step two stays constant from one review to the next so the deltas are comparable.

The cadence is part of the framework, not a separable decision. Annual reviews are too slow. Monthly reviews are too noisy and get conflated with pipeline review. Quarterly reviews catch coverage gaps roughly three times faster than annual ones.

Frequently Asked Questions

What is a quarterly sales territory review?

A quarterly sales territory review is a structured diagnostic, run every 90 days, in which sales leadership, RevOps, and sales ops examine the design and health of each territory, not only the rep’s number. The review uses account composition, pipeline coverage, conversion, drive-time, and competitor data to decide if the territory should be left alone, monitored, adjusted, or rebalanced.

How often should you review sales territories?

Quarterly at minimum for fast-moving sectors like SaaS, technology, and medical sales. Biannually or annually for more stable sectors. Annual-only cycles miss too many shifts between reviews, and recent research finds quarterly reviews catch coverage gaps roughly three times faster than annual ones.

How often do most companies actually re-plan their territories?

Sales Management Association and Xactly research, originally published in 2018 and still widely cited through 2024, found the majority of companies design and plan their sales territories only once per year. Nearly 40% of those companies believe more frequent planning would add value.

What should be on a sales territory review checklist?

A complete checklist covers the account snapshot (won, lost, expanded, contracted), pipeline coverage by territory, conversion rate by stage versus system mean, deal velocity and win rate, rep activity versus outcome, drive-time refresh for field teams, firmographic and ICP drift, competitor activity inside the polygon, and action items with owners, deadlines, and success metrics.

Who should attend a quarterly territory review?

Sales leadership, sales operations, and RevOps are the core attendees. Finance is invited when the proposed action involves quota or compensation changes. HR joins when the action involves headcount or rep reassignment.

What metrics matter most in a territory review?

Pipeline coverage ratio (typically 3-5x quota for B2B SaaS, 5-7x for new territories), conversion rate by stage, deal velocity, win rate, and rep activity versus outcome. Each metric is compared to prior quarter and to system mean.

What is territory drift?

Territory drift is the gradual misalignment between a territory’s original design and current market reality, caused by account growth or attrition, competitor entry, product launches, headcount changes, or ICP shifts.

What does an effective territory review produce?

A one-page action sheet per flagged territory containing the decision (no change, monitor, adjust, or rebalance), the owner, the deadline, the success metric, and the date the territory will be re-inspected.

How long should a quarterly territory review meeting take?

Most organizations budget two to four hours for the diagnostic portion when reviewing 8-20 territories, with pre-work done in advance by named owners.