A location intelligence (LI) strategy is a business-oriented plan that defines which decisions an organization will inform with geospatial data, which platforms support those decisions, who governs the data, and how the program matures. It replaces ad hoc mapping with a repeatable framework, usually structured as eleven operational steps grouped under five widely cited program pillars covering business alignment, governance, systems, engagement, and internal capacity.

The reason this needs to be a strategy, rather than a project, sits in the regulatory record. In January 2024 the FTC issued its first prohibition on the sale of precise location data, against the broker X-Mode Social and its successor Outlogic. In December 2024 the agency followed with parallel actions against Mobilewalla, including the first ban on collecting consumer data from real-time bidding exchanges, and against Gravy Analytics and Venntel. The Mobilewalla order was finalized in January 2025, the fifth FTC action targeting sensitive location-data handling in twelve months. California’s CCPA already classifies any data identifying a person within an 1,850-foot radius as sensitive personal information, and on February 21, 2025 the state legislature introduced AB 1355, the California Location Privacy Act, proposing further restrictions. An LI strategy now requires an executive sponsor because the underlying asset is both valuable and regulated.

The remainder of this article walks through the eleven-step framework from use case discovery through measurement and scale, with notes on staffing, tooling, and the privacy obligations that sit underneath every step.

Why a Strategy Differs from Ad Hoc Mapping

Ad hoc mapping produces a single map for a single meeting. A strategy answers a different question, namely which business problems will the organization route through spatial analysis on a continuing basis, and how will that capability be funded, staffed, and governed. The distinction matters because the inputs, costs, and risks compound. A territory map drawn once in a presentation has no downstream dependencies. A territory model that drives quota assignments, expense reimbursement, and customer routing has dependencies on address quality, geocoding accuracy, CRM synchronization, privacy review, and recurring tooling spend.

Market scale gives the strategy weight. Mordor Intelligence placed the global location intelligence market at $25.06B in 2025, projected to reach $47.09B by 2030. Grand View Research estimates 2024 spending at $21.21B with a 16.8% CAGR through 2030. North America accounts for 38.6% of the total. Retail held 24.54% of category spend in 2024, and 98% of retail CEOs surveyed the same year called location intelligence central to their business goals. Geospatial analytics adoption in retail and logistics rose 49% in 2024 alone.

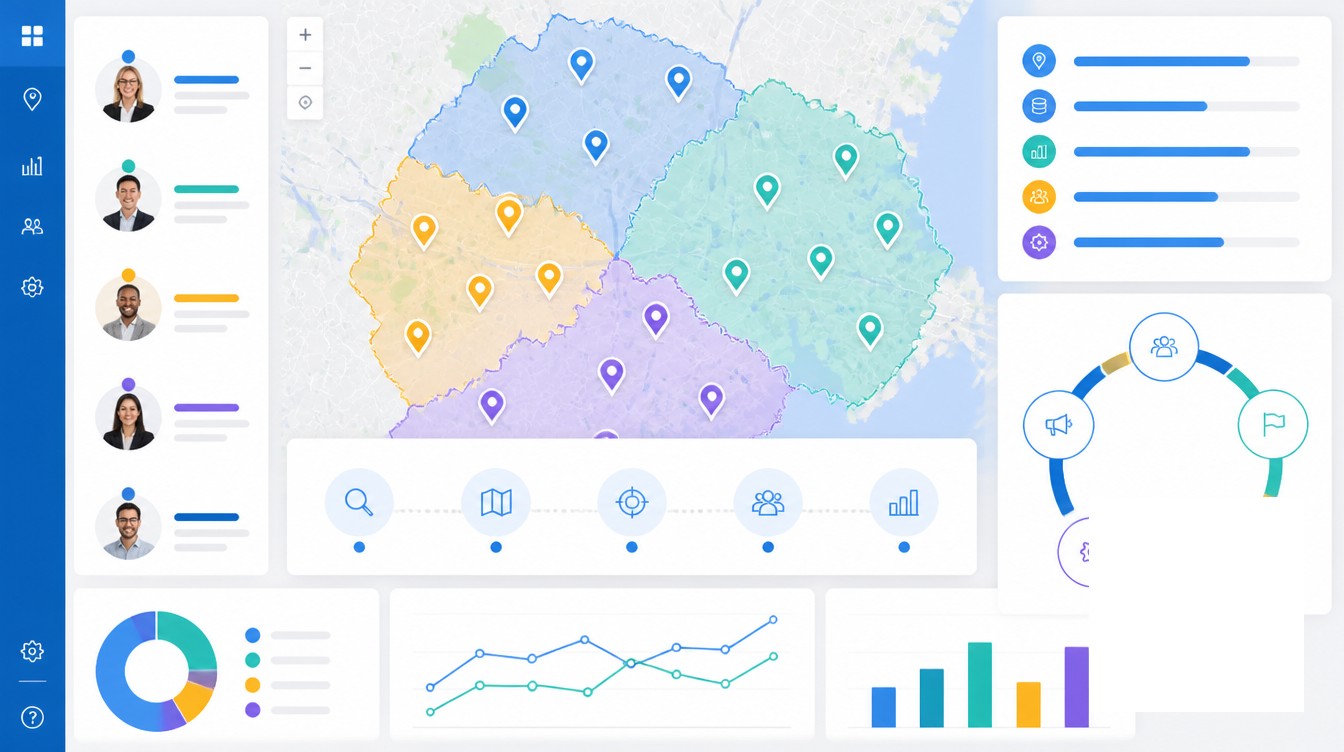

A strategy also names its sponsorship structure. The widely used geospatial-program model identifies three sponsorship roles. A champion advocates for the value internally. An executive sponsor secures the resources and funding. A technical sponsor owns sustainable implementation, usually a geospatial technical director or LI program owner. The champion is rarely senior enough to sign the budget, and the technical lead is rarely positioned to remove interdepartmental obstacles. The executive sponsor sits between the two and unblocks both.

Use Case Discovery and Data Audit

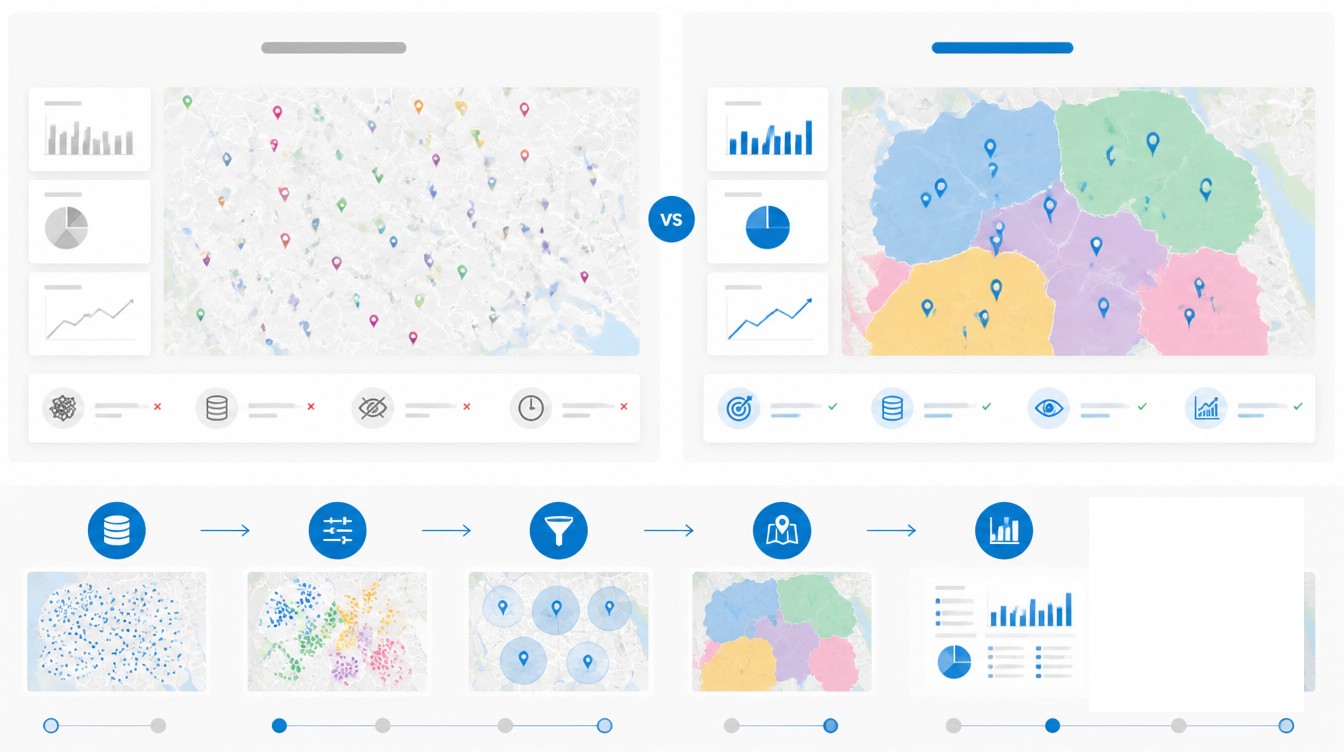

The first two steps of an LI program decide what gets built and what raw material is available to build it with. Skipping either step almost always produces a tool selection that solves the wrong problem.

Identify the Business Questions Location Can Answer

Excellent data analysis starts with questions, not with datasets. Use case discovery is the structured workshop that surfaces those questions before any platform is shortlisted. A standard workshop runs four or more hours with five to twelve participants drawn from business leadership, IT, product owners, and the functional departments that will use the output. The workshop deliverables are an information context map, a data availability and quality snapshot, and a prioritized roadmap with named owners and timelines.

The discipline of the workshop is keeping the conversation on what the business problem is, without prematurely interjecting how the problem will be solved. Common high-value questions surfaced in these sessions include which territories are over- or under-served relative to opportunity, which proposed store sites have the highest probability of meeting average unit volume (AUV) targets, which delivery routes can absorb a 15% volume increase without overtime, and which insurance policies sit inside an emerging wildfire perimeter. Each question implies different data inputs and different analytical depth, which is why the workshop precedes the tooling decision.

Audit Existing Location Data

The audit measures what the organization already owns, in what state, and what it would cost to remediate. Roughly 20% of addresses entered online contain errors. Without validation, a 20% bad-address rate becomes a 20% bad-geocode rate, including geocodes that resolve to the wrong building, the wrong block, or no real location at all. A spatial model needs a match rate of approximately 85% to be statistically reliable. An unvalidated dataset with 20% errors tops out near 80%, which puts address scrubbing on the critical path for any predictive use case.
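The arithmetic behind that threshold can be sketched in a few lines. The example is illustrative only: the `MatchRateReport` class, the record counts, and the treatment of 85% as a hard cutoff are assumptions made for the sketch, not part of any standard.

```python
from dataclasses import dataclass

# Assumption: the approximate 85% reliability threshold is treated as a hard cutoff.
REQUIRED_MATCH_PERCENT = 85


@dataclass
class MatchRateReport:
    """Hypothetical summary of one geocoding run."""
    total: int
    matched: int

    @property
    def match_rate(self) -> float:
        # Return 0.0 for an empty dataset instead of dividing by zero.
        return self.matched / self.total if self.total else 0.0

    @property
    def meets_threshold(self) -> bool:
        # Integer comparison avoids floating-point rounding at the boundary.
        return self.total > 0 and self.matched * 100 >= REQUIRED_MATCH_PERCENT * self.total

    @property
    def shortfall(self) -> int:
        """Additional matched records needed to reach the threshold."""
        needed = -(-REQUIRED_MATCH_PERCENT * self.total // 100)  # ceiling division
        return max(0, needed - self.matched)


# Hypothetical audit: 10,000 addresses, 20% of which fail to geocode.
report = MatchRateReport(total=10_000, matched=8_000)
print(f"{report.match_rate:.0%} matched, threshold met: {report.meets_threshold}, "
      f"records to remediate: {report.shortfall}")
```

With these made-up counts, the dataset sits at 80% and would need 500 more records to match before it clears the threshold, which gives a rough size for the scrubbing backlog.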

The audit should test four properties for every location dataset in scope, namely positional accuracy, thematic accuracy, completeness, and logical consistency. These are four of the data quality elements defined in ISO 19157:2013, which also covers temporal quality and usability, and they map directly to the failure modes that derail LI projects later. Geocodability is sometimes used as a quality proxy, with the caveat that an address can be correct and not geocodable, or geocodable and not accurate at the rooftop level. Both errors need to be caught in the audit, not in production.
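Two of those properties, completeness and logical consistency, can be screened without reference data. The sketch below assumes a hypothetical record schema with `address`, `lat`, and `lon` keys. Positional and thematic accuracy are left out because they need a trusted reference set to compare against.

```python
# Hypothetical schema: every record is a dict with these keys.
REQUIRED_FIELDS = ("address", "lat", "lon")


def is_complete(record: dict) -> bool:
    """Completeness: every required field is present and non-empty."""
    return all(record.get(field) not in (None, "") for field in REQUIRED_FIELDS)


def has_valid_coordinates(record: dict) -> bool:
    """Logical consistency: coordinates fall inside the valid lat/lon ranges."""
    lat, lon = record.get("lat"), record.get("lon")
    if lat is None or lon is None:
        return False
    return -90 <= lat <= 90 and -180 <= lon <= 180


def share(records: list, check) -> float:
    """Fraction of records that pass a check (0.0 for an empty list)."""
    return sum(check(r) for r in records) / len(records) if records else 0.0


# Made-up sample records for illustration.
sample = [
    {"address": "1 Example St", "lat": 40.0, "lon": -75.0},
    {"address": "2 Example St", "lat": None, "lon": -75.0},   # missing latitude
    {"address": "3 Example St", "lat": 123.4, "lon": -75.0},  # latitude out of range
    {"address": "4 Example St", "lat": 41.0, "lon": -74.0},
]
print("completeness:", share(sample, is_complete))
print("coordinate validity:", share(sample, has_valid_coordinates))
```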

Data Inputs, Geocoding, and Governance Standards

Once the questions and the gaps are documented, the program selects its data inputs and the geocoding standard that will normalize them. These two steps set the accuracy ceiling for every downstream model.

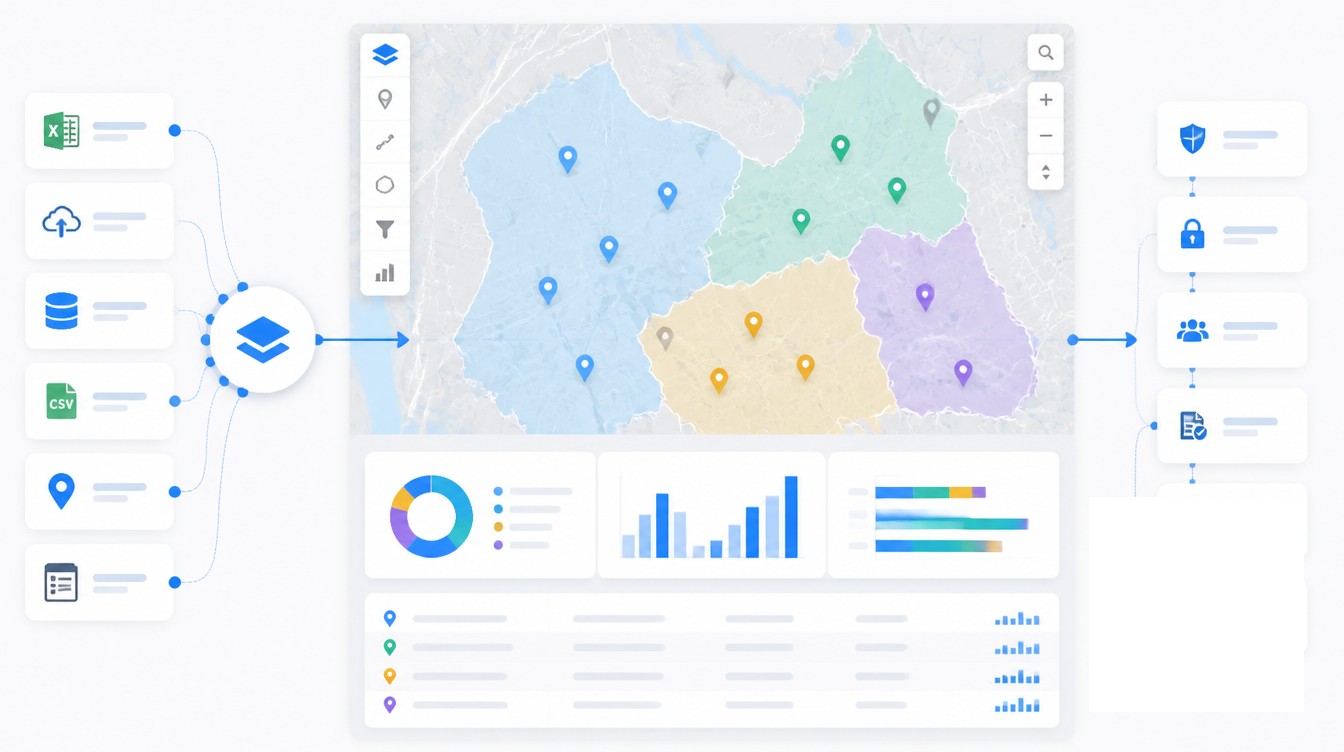

First-Party and Third-Party Inputs

First-party data is the material the organization already produces, including customer records in the CRM, transactions in the ERP, store and asset coordinates in operational systems, and IoT telemetry from vehicles or sensors. These are the highest-trust inputs and usually the cheapest to obtain, because the organization already owns them. The strategy should require that all first-party location records pass through a single geocoding pipeline rather than each system geocoding independently, which is how the same customer ends up at two different rooftops.

Third-party data fills the gaps the first-party record cannot fill. Common categories include U.S. Census demographics joined at the block-group level, points-of-interest and foot-traffic feeds, weather and climate data, traffic and mobility data, and parcel or boundary files. Buyers should be cautious with mobile-derived datasets. Peer-reviewed research published in 2023 documented that mobile-location panels show measurable underrepresentation bias for Hispanic populations, low-income households, and people with low formal education, with bias that varies across geography and time. A demographic model built on a panel with that bias will misallocate inventory, miscalibrate site scores, and produce equity exposure that a privacy review will surface eventually.
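One simple way to screen a panel for this kind of bias is to compare each group's share of the panel with its share of the reference population. The sketch below uses made-up counts and an illustrative 0.8 cutoff. Neither comes from the cited research, and the group names are placeholders.

```python
# Assumption: a ratio below 0.8 is flagged. The cutoff is illustrative, not a standard.
UNDERREPRESENTATION_CUTOFF = 0.8


def representation_ratios(panel_counts: dict, population_counts: dict) -> dict:
    """Ratio of panel share to population share per group (1.0 means proportional)."""
    panel_total = sum(panel_counts.values())
    population_total = sum(population_counts.values())
    if panel_total == 0 or population_total == 0:
        raise ValueError("panel and population counts must be non-empty")
    ratios = {}
    for group, population in population_counts.items():
        if population == 0:
            continue  # no reference population to compare against
        panel_share = panel_counts.get(group, 0) / panel_total
        population_share = population / population_total
        ratios[group] = panel_share / population_share
    return ratios


# Hypothetical counts for one geography.
population = {"group_a": 200, "group_b": 800}
panel = {"group_a": 10, "group_b": 90}

ratios = representation_ratios(panel, population)
flagged = [g for g, r in ratios.items() if r < UNDERREPRESENTATION_CUTOFF]
print(ratios, flagged)
```

Because the bias varies across geography and time, a check like this would need to run per area and per refresh rather than once for the whole panel.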

Geocoding Accuracy and Address Standards

The de facto address-quality benchmark in the United States is USPS CASS (Coding Accuracy Support System) certification, which tests address-matching software on delivery-point, ZIP, and ZIP+4 coding accuracy. Rooftop geocoding accuracy varies across major online services. A 2023 Springer study comparing the leading engines reported match rates ranging from 51% to 83%, with median positional errors between 3 and 14 meters. Specialized commercial address-verification vendors report rooftop accuracy as high as 97%. The differences matter when the use case is route optimization or insurance underwriting, where a fifty-foot (roughly 15-meter) error can place a building on the wrong side of a flood line.
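Positional error of this kind is usually measured as the great-circle distance between a geocoded point and a trusted reference point. The sketch below uses the haversine formula with a spherical-Earth approximation. The fifty-foot tolerance and the function names are assumptions chosen to mirror the example above.

```python
import math

EARTH_RADIUS_M = 6_371_000   # mean Earth radius, spherical approximation
METERS_PER_FOOT = 0.3048     # exact by definition
TOLERANCE_M = 50 * METERS_PER_FOOT  # assumed tolerance: fifty feet, 15.24 m


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two WGS84 points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def exceeds_tolerance(geocoded: tuple, reference: tuple) -> bool:
    """True when the geocode is farther from the reference than the tolerance."""
    return haversine_m(*geocoded, *reference) > TOLERANCE_M


# Hypothetical points: the geocode is 0.0002 degrees of latitude off the reference.
reference_point = (40.0000, -75.0000)
geocoded_point = (40.0002, -75.0000)
print(round(haversine_m(*geocoded_point, *reference_point), 1),
      exceeds_tolerance(geocoded_point, reference_point))
```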

Address scrubbing, the standardization step that fixes punctuation, abbreviations, suite formatting, and missing components, runs before geocoding. The governance document should name the canonical scrubbing tool, the geocoder, the required match-rate threshold, and the escalation path for records that fail. Without those four entries written down, every analyst silently picks their own answer.
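As a rough illustration, the four governance entries and a minimal scrubbing step might look like the sketch below. The `GeocodingStandard` fields, the placeholder tool names, and the short abbreviation table are hypothetical. A production scrubber would follow the full USPS addressing conventions rather than this handful of rules.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class GeocodingStandard:
    """The four entries the governance document should name (values are placeholders)."""
    scrubbing_tool: str
    geocoder: str
    required_match_rate: float
    escalation_path: str


STANDARD = GeocodingStandard(
    scrubbing_tool="example-scrubber",        # hypothetical tool name
    geocoder="example-geocoder",              # hypothetical geocoder name
    required_match_rate=0.85,
    escalation_path="data-stewards@example.com",
)

# Small illustrative subset of street and unit abbreviations.
ABBREVIATIONS = {
    "STREET": "ST", "AVENUE": "AVE", "BOULEVARD": "BLVD",
    "ROAD": "RD", "DRIVE": "DR", "SUITE": "STE",
}


def scrub_address(raw: str) -> str:
    """Uppercase, drop periods and commas, collapse whitespace, abbreviate."""
    text = re.sub(r"[.,]", " ", raw.upper())
    tokens = text.split()  # split() also collapses repeated whitespace
    return " ".join(ABBREVIATIONS.get(token, token) for token in tokens)


print(scrub_address("123 Main Street, Suite 4."))
print(scrub_address("123 main st.") == scrub_address("123 Main Street"))
```

Two spellings of the same address reduce to one canonical string, which is what lets a single pipeline return the same coordinates for the same customer regardless of the source system.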

Tooling Choices, KPIs, and Pilot Selection

Tooling is the step organizations want to do first and should do fifth, after use cases, the data audit, data inputs, and the geocoding standard are settled. The right platform depends on who will use it and what question it has to answer. A 2024 industry comparison of spatial-processing platforms placed six options in distinct tiers, including PostGIS for transactional workloads under 100GB, cloud data warehouses such as Snowflake and BigQuery for light spatial enrichment inside a wider analytics stack, Databricks for vector spatial joins on a lakehouse, Apache Sedona for self-managed open-source distributed processing, and managed raster and vector engines for production work at scale. Snowflake exposes 19 native H3 functions and more than 60 spatial SQL functions but no raster, 3D, or topology support. BigQuery has spatial functions but no R-tree indexes. Each ceiling matters when the use case grows.

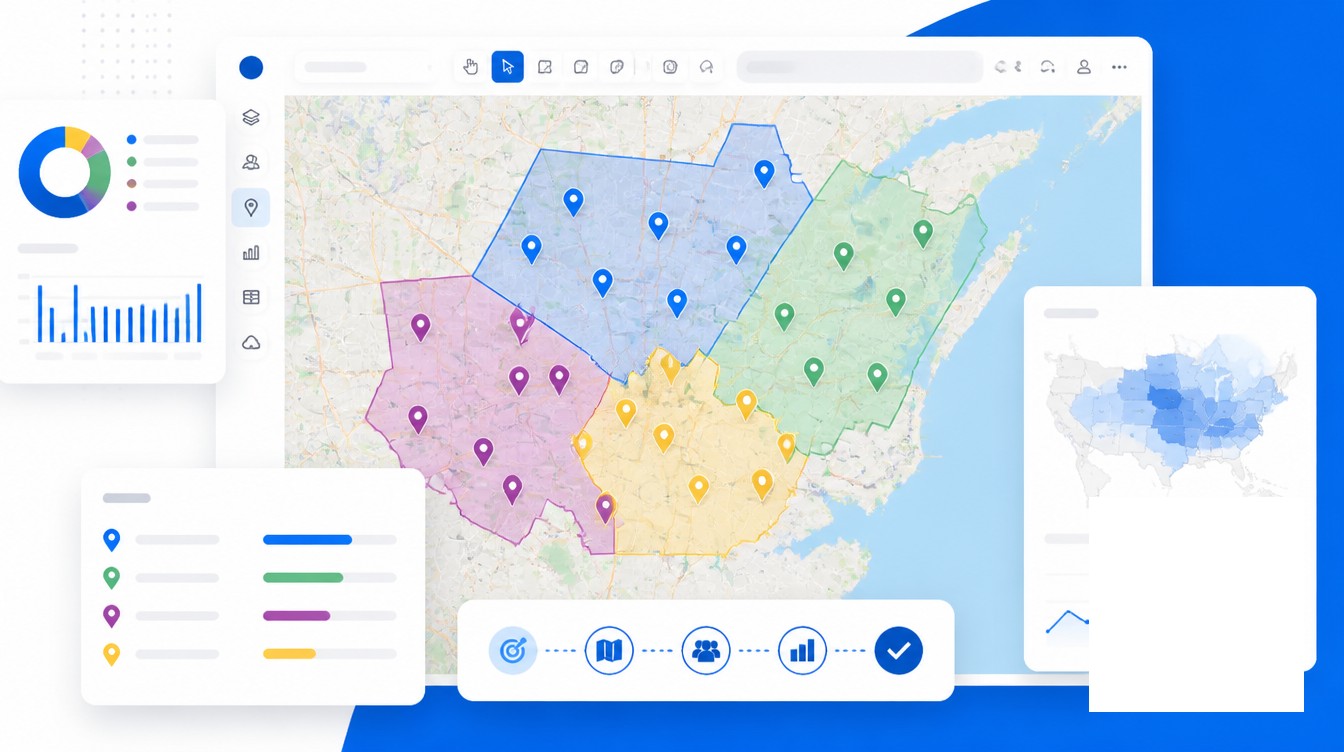

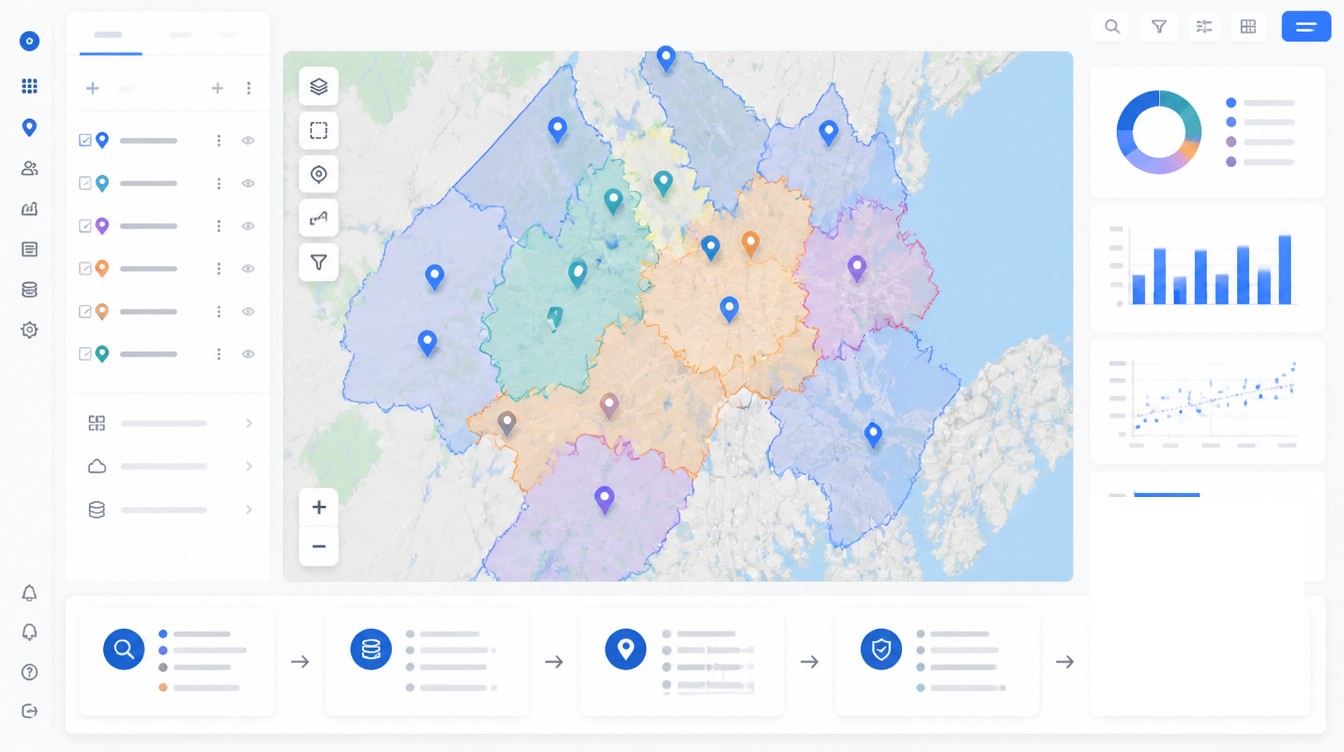

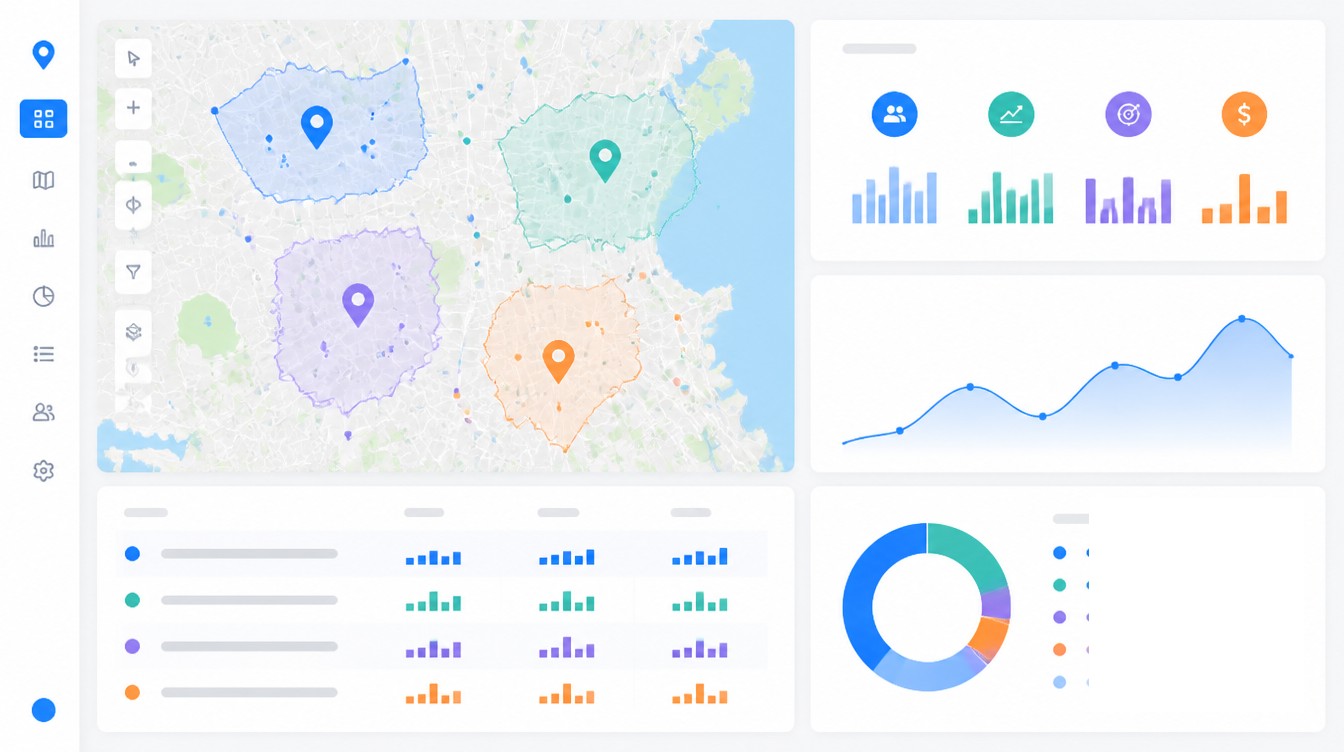

For non-technical users, business mapping tools sized for sales, operations, and field teams hit the right tier. For data scientists, spatial SQL on the warehouse keeps spatial work close to the rest of the analytics environment. For full GIS analysts, desktop GIS retains its place. Forcing enterprise GIS onto non-GIS users produces shelfware and an adoption problem that no training plan recovers from.

KPI definition belongs before deployment, not after. Each pilot use case needs a measured baseline. The metrics that recur in LI reporting are routing and fuel cost reduction, revenue lift from territory optimization, planning cycle time, site-selection accuracy, and average unit volume of new locations opened with structured LI versus gut feel. Maptive customers report 18% fuel cost reductions, 15% revenue growth from territory optimization, and 75% faster planning cycles, with quarterly savings above $100,000 at the larger end of the user base.
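A before-and-after comparison only works if the baseline is captured first. The sketch below shows the calculation with hypothetical KPI names and made-up figures. They are not drawn from any of the results quoted above.

```python
from dataclasses import dataclass


@dataclass
class KpiMeasurement:
    """One pilot KPI with its pre-deployment baseline (hypothetical structure)."""
    name: str
    baseline: float
    post_deployment: float

    @property
    def percent_change(self) -> float:
        if self.baseline == 0:
            raise ValueError("baseline must be non-zero to compute a percent change")
        return (self.post_deployment - self.baseline) / self.baseline * 100


# Made-up pilot figures for illustration only.
pilot_kpis = [
    KpiMeasurement("monthly_fuel_cost_usd", baseline=40_000, post_deployment=34_000),
    KpiMeasurement("planning_cycle_days", baseline=20, post_deployment=8),
]

for kpi in pilot_kpis:
    print(f"{kpi.name}: {kpi.percent_change:+.1f}%")
```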

Pilot selection narrows the work. A 90-day pilot is the credibility threshold for an executive sponsor expecting proof rather than promises. Pilot execution usually runs four to six weeks of dedicated team time, with the remaining window allocated to measurement and stakeholder review. The pilot use case should be visible enough to matter, narrow enough to finish, and instrumented well enough to produce a defensible before-and-after number. Documentation of failures, integration friction, and user feedback is as load-bearing as the success metric, because the next pilot inherits those lessons.

Roles, Skills, and Change Management

A location intelligence program is cross-functional by construction. Assuming it is a one-person job is the second-most-cited cause of program stall, after starting with the tool.

Core Roles in an LI Program

The reference staffing model includes five roles. The executive sponsor secures funding and political cover. The LI program owner, sometimes titled geospatial technical director, sets the roadmap, manages staff and budget, and serves as the primary liaison to other IT divisions. A GIS analyst or data engineer handles pattern discovery, model construction, and the map design behind the dashboards. A business analyst translates business questions into spatial requirements, which is the bridge that prevents technical work from drifting away from the original use case. A change management lead owns adoption, communication, and the political work of normalizing spatial thinking inside departments that have not previously used it.

Skills Gap and Training Investment

The global GIS training and certification market is projected to reach $1.2B by 2027 at an 18.3% CAGR. The demand profile has moved toward what industry hiring reports call geocomputational skills, the combination of geography fundamentals with software engineering and data science. Supply has not kept up. The geospatial skills shortfall in Australia alone was estimated at $3.1B annually in 2024, with a 1,400-professional gap. North American figures vary by source, but the directional finding is the same. A serious LI program budgets for internal training, certification reimbursement, and at least one senior outside hire, because the labor market does not currently fill the role from inventory.

Integration with CRM, BI, and Data Platforms

The fastest way to kill LI adoption is to make users leave their primary workflow to consult a map. Integration paths into CRM and BI tools include REST API, webhook, native connector, and CSV. Choose the path that matches the internal skill set rather than the path the vendor markets hardest. Native CRM connectors are now standard for major LI platforms, with bidirectional sync to the leading sales and marketing CRMs in use across mid-market and enterprise teams. Refresh latency in the 90-second range is realistic for current platforms, which is fast enough that field reps see updated territory boundaries within the same session.

Privacy, Compliance, and Iteration

The final two steps of the framework close the loop. Step ten is governance and compliance. Step eleven is iteration.

Governance has moved from a documentation exercise to a regulatory requirement. California’s CCPA/CPRA defines precise geolocation as any data identifying a person within an 1,850-foot radius and classifies it as sensitive personal information. Consumers can direct a business to use it only for the limited purposes necessary to deliver a requested service. The GDPR treats geolocation as personal data and requires a lawful basis before collection or processing. For location data that basis is most often explicit consent, with narrow alternatives such as a clearly defined security purpose. The FTC’s 2024 and 2025 enforcement record sets the floor with an order against X-Mode and Outlogic in January 2024, parallel orders against Mobilewalla and Gravy Analytics with Venntel in December 2024, and the finalized Mobilewalla order in January 2025. In March 2025 California Attorney General Rob Bonta announced an investigative sweep into location-data practices among mobile apps, ad networks, and brokers. AB 1355, introduced February 21, 2025, would extend the existing CCPA framework with additional restrictions on the commercial use of precise location data.
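The 1,850-foot radius converts to roughly 564 meters, which gives a first-pass screen for deciding which datasets need sensitive-data handling. The sketch below assumes each dataset reports a horizontal accuracy radius in meters. The function name is hypothetical, and the check is a triage aid for the governance review rather than a legal determination.

```python
METERS_PER_FOOT = 0.3048  # exact by definition
PRECISE_GEOLOCATION_RADIUS_M = 1_850 * METERS_PER_FOOT  # about 563.88 m


def is_precise_geolocation(accuracy_radius_m: float) -> bool:
    """Flag data that locates a person within the 1,850-foot radius."""
    if accuracy_radius_m < 0:
        raise ValueError("accuracy radius cannot be negative")
    return accuracy_radius_m <= PRECISE_GEOLOCATION_RADIUS_M


# Hypothetical examples: a GPS fix and a coarse city-level IP lookup.
print(is_precise_geolocation(10.0))     # GPS-grade accuracy
print(is_precise_geolocation(5_000.0))  # coarse, city-level accuracy
```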

The operational implications are concrete. The governance document needs a written purpose limitation for each location dataset, a consent record for any data sourced from a mobile or third-party broker, a retention schedule, a deletion workflow, and a contractual flow-down covering third-party data sharing with vendors. A privacy review is formally part of step ten of the framework, but it should be scoped during use case discovery and completed before the platform is purchased, because retrofitting consent is more expensive than scoping for it at the start.
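Those requirements can be captured as one record per dataset, with a check that lists whatever is missing. The sketch below is a minimal illustration. The `LocationDatasetRecord` fields, the source labels, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

# Assumption: these source labels mark data that needs a consent record.
CONSENT_REQUIRED_SOURCES = {"mobile", "broker"}


@dataclass
class LocationDatasetRecord:
    """Hypothetical governance entry for one location dataset."""
    name: str
    purpose: str                      # written purpose limitation
    source: str                       # e.g. "first_party", "mobile", "broker"
    consent_reference: Optional[str]  # pointer to the consent record, if any
    retention_days: int
    collected_on: date
    vendor_flow_down: bool            # contract covers third-party sharing

    def governance_gaps(self, today: date) -> List[str]:
        gaps = []
        if not self.purpose.strip():
            gaps.append("missing purpose limitation")
        if self.source in CONSENT_REQUIRED_SOURCES and not self.consent_reference:
            gaps.append("missing consent record")
        if today > self.collected_on + timedelta(days=self.retention_days):
            gaps.append("past retention date")  # route to the deletion workflow
        if not self.vendor_flow_down:
            gaps.append("missing vendor flow-down clause")
        return gaps


# Made-up record for illustration.
feed = LocationDatasetRecord(
    name="foot_traffic_feed", purpose="site selection scoring", source="broker",
    consent_reference=None, retention_days=365,
    collected_on=date(2024, 1, 1), vendor_flow_down=False,
)
print(feed.governance_gaps(today=date(2025, 6, 1)))
```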

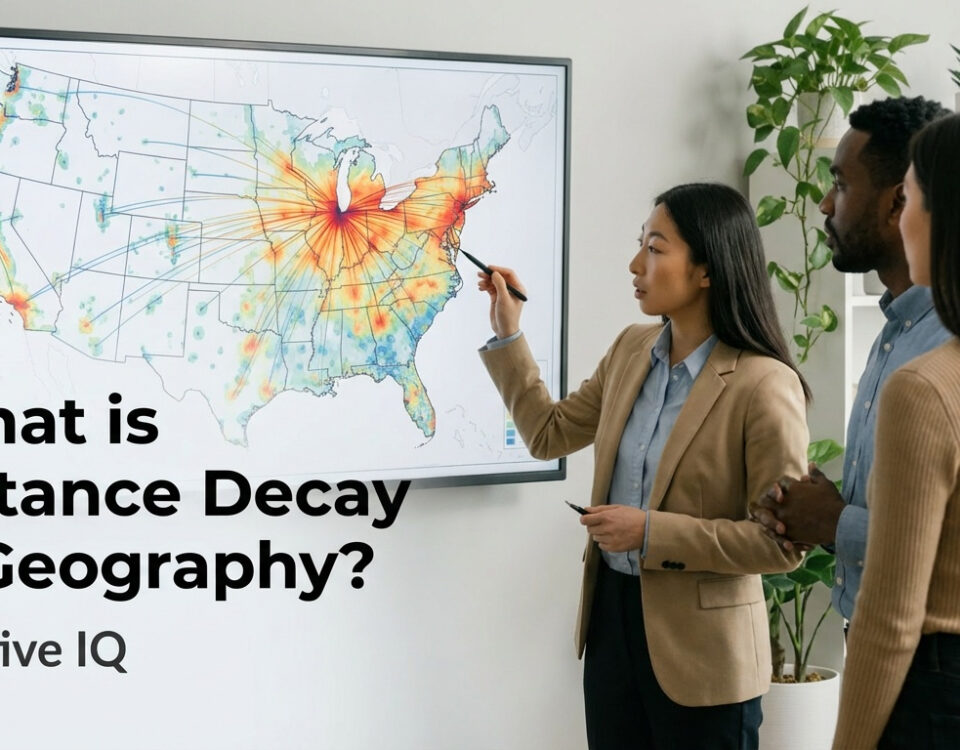

Iteration is the eleventh step and runs continuously after launch. Stakeholder feedback gets gathered on a defined cadence, KPIs are re-baselined as use cases mature, and the program advances up a five-level spatial-analytics maturity ladder that runs from descriptive mapping through diagnostic spatial analysis, predictive geospatial modeling, prescriptive optimization, and autonomous decisioning. Public-sector programs benchmark themselves against the NSGIC Geospatial Maturity Assessment or the URISA GIS Capability Maturity Model. Commercial programs have fewer standardized benchmarks but can apply the same ladder, with year-over-year movement up one rung as a defensible internal target. The strategy document gets reviewed at the same cadence, because the regulatory baseline keeps moving and the data inputs keep expanding.

Frequently Asked Questions

What is the difference between location intelligence and GIS?

GIS is the underlying technology stack for capturing, storing, and analyzing spatial data. Location intelligence is the business outcome produced when GIS, geospatial data, AI, and BI are combined for a specific business question. A GIS team can exist without an LI strategy. An LI strategy without a GIS or equivalent spatial-processing capability has no engine.

What is the location intelligence maturity model?

The common five-stage ladder tracks the general analytics maturity model. The five stages run from descriptive mapping (what happened, where), through diagnostic spatial analysis (why here), predictive geospatial modeling (what will happen here), prescriptive optimization (what should we do, where), and autonomous decisioning with real-time spatial-AI loops. Public-sector frameworks like the NSGIC Geospatial Maturity Assessment and the URISA GIS Capability Maturity Model provide formal assessment instruments.

How long does it take to implement a location intelligence program?

Typical timelines run a 30- to 45-day proof of concept, a 90-day pilot to prove ROI, a 6- to 12-month phased rollout of the first scaled use case, and a multi-year climb through the five-level maturity ladder. The 90-day pilot is the standard credibility threshold for executive buy-in.

How much does location intelligence software cost?

Entry-level business mapping platforms run $200 to $500 per month, roughly $2,400 to $6,000 per year. Mid-market platforms with foot-traffic and predictive analytics range from $1,000 to $5,000 per month. Enterprise GIS suites with dedicated support typically cost $10,000 to $100,000 or more per year, with full enterprise programs frequently exceeding $250,000 annually once data and services are included.

Who should own a location intelligence program?

A successful program needs a champion who advocates for the value, an executive sponsor who provides resources and funding, and a technical sponsor who manages the roadmap, staff, and budget. Cross-functional teams usually include a GIS analyst, a business analyst, a data engineer, and a change management lead.

What is location data governance?

Location data governance is the set of policies, ownership rules, and quality standards that control how addresses, coordinates, and spatial datasets are created, validated, stored, accessed, and retired. It covers geocoding standards such as USPS CASS certification, address scrubbing, ISO 19157 spatial-data quality elements, and privacy compliance for sensitive location data.

Why does address data quality matter for location intelligence?

Around 20% of addresses entered online contain errors, and those errors translate directly into bad geocodes, sometimes placing locations miles from where they actually are. A spatial model usually needs around an 85% match rate to be statistically reliable, which makes scrubbing and CASS-style standardization a prerequisite for any serious LI program.

What is precise geolocation under CCPA?

California’s CCPA and CPRA define precise geolocation as any data identifying a person’s location within a radius of 1,850 feet, including GPS coordinates. It is classified as sensitive personal information, and consumers have the right to direct businesses to use it only for the limited purposes necessary to provide a requested service.

Does GDPR apply to location data?

Yes. The GDPR treats geolocation data as personal data and requires a lawful basis before it is collected or processed. In practice that usually means explicit user consent, unless processing is justified by another lawful basis such as a clearly defined security purpose. EU residents must be able to withdraw consent and exercise data-subject rights over their location information.

What enforcement actions has the FTC taken on location data?

Since January 2024 the FTC has issued orders against X-Mode Social and its successor Outlogic (the first FTC prohibition on selling precise location data), Mobilewalla in December 2024 (including the first ban on collecting consumer data from real-time bidding exchanges), and Gravy Analytics with Venntel in December 2024. The Mobilewalla order was finalized in January 2025, marking the FTC’s fifth action targeting sensitive location-data handling.

How do you measure ROI for a location intelligence program?

Define a baseline KPI for each pilot use case before tooling goes live, then compare post-deployment performance. Common metrics include fuel and routing cost reduction, revenue lift from territory optimization, planning cycle time, site-selection accuracy, and average unit volume of new locations opened with structured LI versus gut feel.

How do you evaluate location intelligence vendors?

A pre-selection checklist should cover use case clarity, ownership of the underlying coordinates, internal skill set, integration path, and budget structure. Technical evaluation criteria include data accuracy and freshness, geographic coverage, analytical depth, ease of integration with CRM and BI, privacy compliance, ease of use for non-GIS users, pricing transparency, total cost of ownership, and vendor support.